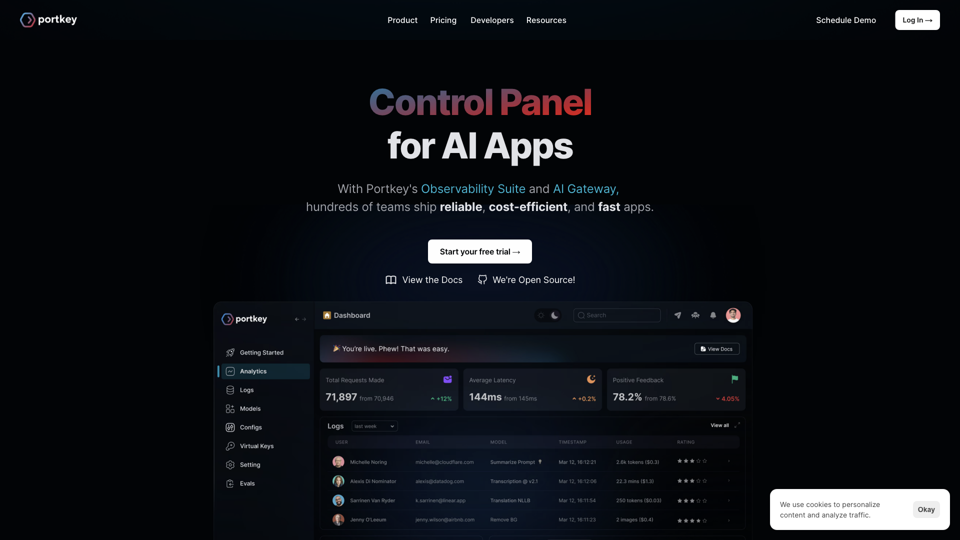

What is Portkey.ai, the Control Panel for AI Apps?

Portkey.ai is a comprehensive control panel designed for managing AI applications. It provides a suite of tools that enhance observability, reliability, and efficiency in deploying AI models. With features like the Observability Suite, AI Gateway, and Prompt Playground, Portkey enables teams to monitor, route, and optimize AI interactions effectively.

Features of Portkey.ai

- Observability Suite: Monitor costs, quality, and latency with insights from over 40 metrics. Debug with detailed logs and traces to ensure your AI applications run smoothly.
- AI Gateway: Route requests reliably to over 100 large language models (LLMs). Set up fallbacks, load balancing, retries, caching, and canary tests to maintain high availability.
- Prompt Playground: Collaboratively develop and deploy effective prompts without relying on git. This feature simplifies the process of refining AI interactions.
- Feedback Evaluation: Collect and track feedback from users. Set up tests to automatically judge outputs and identify areas for improvement in real time.

How to use Portkey.ai

- Integration: Replace the OpenAI API base path in your app with Portkey's API endpoint. This step integrates Portkey into your application, allowing it to manage and route your AI requests.
- Configuration: Use the AI Gateway to configure routing and fallback options. Set up caching and load balancing to optimize performance.
- Prompt Development: Utilize the Prompt Playground to develop and deploy prompts. Collaborate with your team to refine AI interactions.
- Monitoring: Leverage the Observability Suite to monitor metrics, logs, and traces. Identify and resolve issues to maintain high-quality AI services.
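
The integration step above amounts to pointing an OpenAI-style request at a different base URL. The following is a minimal sketch of that idea using only the Python standard library; it builds the request without sending it. The gateway URL `https://api.portkey.ai/v1`, the `x-portkey-api-key` header, the model name, and the helper `build_chat_request` are assumptions for illustration, so check Portkey's own documentation for the exact endpoint and headers.

```python
import json
import urllib.request

OPENAI_BASE_URL = "https://api.openai.com/v1"
# Assumed gateway endpoint; confirm against Portkey's documentation.
PORTKEY_BASE_URL = "https://api.portkey.ai/v1"


def build_chat_request(base_url, api_key, prompt, extra_headers=None):
    """Build (but do not send) an OpenAI-style chat completion request.

    Hypothetical helper: only the base URL and extra headers differ
    between calling the provider directly and calling through a gateway.
    """
    body = {
        "model": "gpt-4o-mini",  # placeholder model name
        "messages": [{"role": "user", "content": prompt}],
    }
    headers = {
        "Content-Type": "application/json",
        "Authorization": f"Bearer {api_key}",
    }
    headers.update(extra_headers or {})
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers=headers,
        method="POST",
    )


# Before: the app talks to the provider directly.
direct = build_chat_request(OPENAI_BASE_URL, "OPENAI_KEY", "Hello")

# After: same payload, different base path plus an assumed gateway header.
routed = build_chat_request(
    PORTKEY_BASE_URL,
    "OPENAI_KEY",
    "Hello",
    extra_headers={"x-portkey-api-key": "PORTKEY_KEY"},
)

print(direct.full_url)
print(routed.full_url)
```

Because the request body is unchanged, the rest of the application code should not need to know whether a gateway sits in between; passing either request to `urllib.request.urlopen` would send it.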

Pricing of Portkey.ai

Portkey.ai offers a free trial to start, with detailed pricing plans available on their website. The cost varies based on usage, features selected, and enterprise requirements. For managed hosting options, contact Portkey directly at hello@portkey.ai.

Useful tips for using Portkey.ai

- Leverage Caching: Use the built-in smart caching to reduce latency and improve response times.
- Set Up Fallbacks: Configure fallback options in the AI Gateway to ensure continuous operation even if primary services fail.
- Regularly Review Metrics: Use the Observability Suite to regularly review performance metrics and make data-driven improvements.
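
To make the caching and fallback tips above concrete, here is a conceptual sketch of what a gateway does when it combines retries, fallbacks, and a cache. It is not Portkey's implementation or configuration format; the class `FallbackRouter` and the two stand-in provider functions are hypothetical and exist only to show the control flow.

```python
from typing import Callable, Dict, List, Tuple

# A provider is a (name, callable) pair; the callable maps a prompt to a reply.
Provider = Tuple[str, Callable[[str], str]]


class FallbackRouter:
    """Hypothetical router: try providers in order, retry each, cache successes."""

    def __init__(self, providers: List[Provider], retries: int = 1):
        self.providers = providers
        self.retries = retries
        self.cache: Dict[str, str] = {}
        self.calls: List[str] = []  # records which provider each attempt hit

    def complete(self, prompt: str) -> str:
        # Cache hit: answer immediately without calling any provider.
        if prompt in self.cache:
            return self.cache[prompt]
        last_error = None
        for name, call in self.providers:
            # One initial attempt plus `retries` extra attempts per provider.
            for _ in range(1 + self.retries):
                self.calls.append(name)
                try:
                    answer = call(prompt)
                except Exception as exc:
                    last_error = exc
                    continue
                self.cache[prompt] = answer
                return answer
        raise RuntimeError("all providers failed") from last_error


def flaky_primary(prompt: str) -> str:
    # Stand-in for a primary model that is currently unavailable.
    raise TimeoutError("primary unavailable")


def backup(prompt: str) -> str:
    # Stand-in for a secondary model that responds normally.
    return f"backup answer to: {prompt}"


router = FallbackRouter([("primary", flaky_primary), ("backup", backup)], retries=1)
first = router.complete("ping")   # primary fails twice, backup answers
second = router.complete("ping")  # served from the cache, no provider call
print(first)
print(router.calls)
```

The second call returns without touching any provider, which is where the latency benefit of caching comes from; the fallback order keeps the app responding while the primary is down.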

Frequently asked questions about Portkey.ai

How does Portkey work?

Portkey integrates with your application by replacing the OpenAI API base path with its own endpoint. This allows Portkey to manage and route all your AI requests, providing enhanced control and observability.

How do you store my data?

Portkey is ISO 27001 and SOC 2 certified, ensuring secure data storage and handling. All data is encrypted in transit and at rest. For enterprise needs, Portkey offers managed hosting within private clouds.

Will this slow down my app?

Not noticeably. Portkey actively benchmarks its gateway to keep the added latency minimal, and features like smart caching and automatic failover may even improve your app's performance.

For more detailed information or specific inquiries, contact Portkey at hello@portkey.ai.